Top AI models lose money betting on the Premier League

Eight leading AI models were given virtual bankrolls to bet on the 2023-24 Premier League season; all lost money, and several went bankrupt after failing to apply the Kelly criterion.

General Reasoning ran KellyBench, a benchmark that asked AI models to build betting strategies for the full 2023-24 English Premier League season. Eight leading models received virtual bankrolls normalized to £100,000 and placed bets across 120 matchdays. Every model finished the season with losses; several went bankrupt or forfeited midseason.

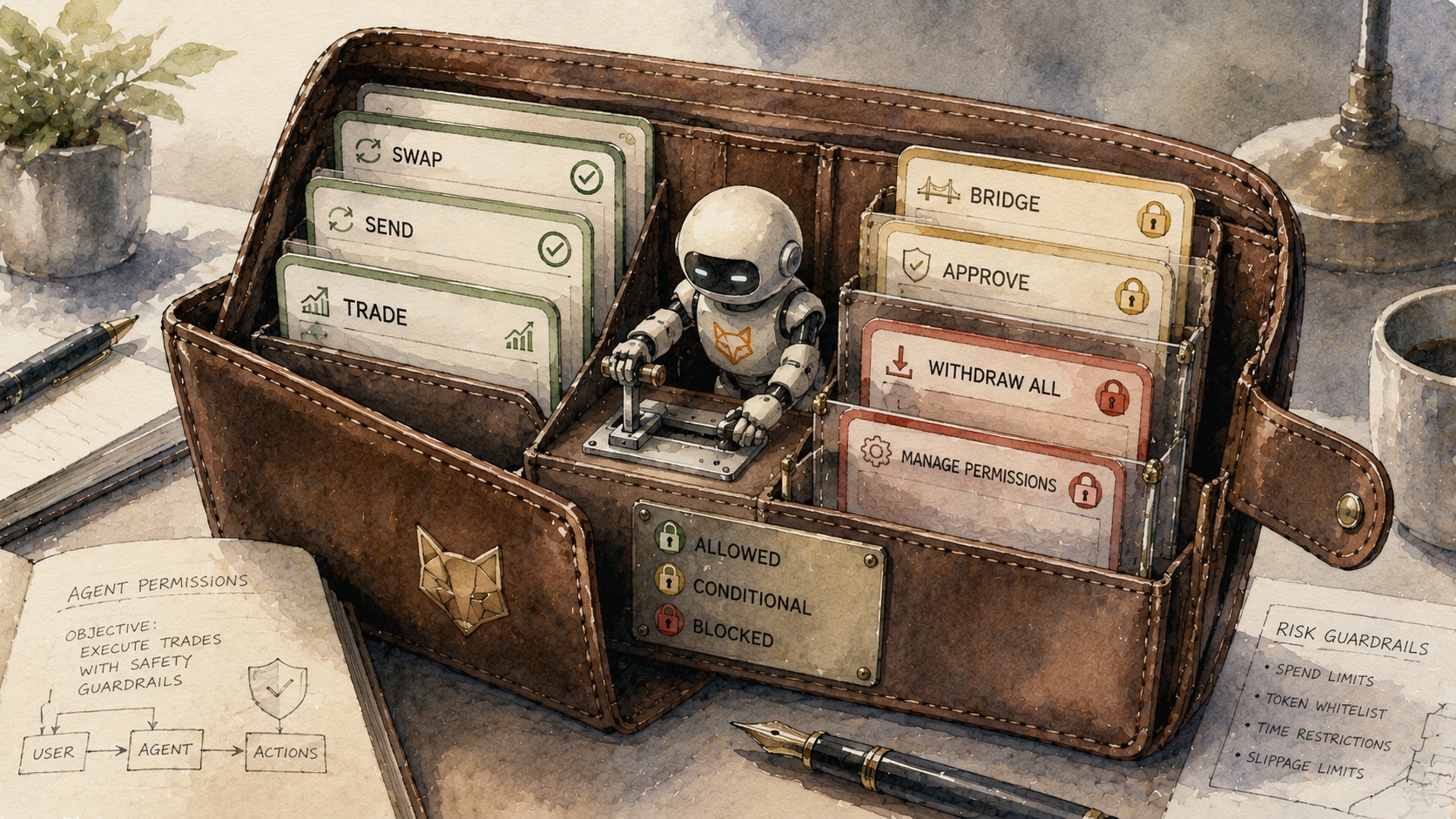

The set included Anthropic’s Claude Opus 4.6, xAI’s Grok 4.20, Google’s Gemini Flash, OpenAI’s GPT-5.4, GLM-5 and Kimi K2.5, among others. Models were free to use external tools and to design machine-learning systems to forecast outcomes and size stakes.

KellyBench centers on the Kelly criterion, a 1956 formula that prescribes how much to stake when a bettor has an edge. Researchers report that each model could describe the Kelly formula but none consistently used it to size bets and protect capital.
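As a rough illustration of the sizing rule the benchmark is named for, the sketch below computes the Kelly fraction f* = (b·p − q) / b for a single bet, where b is the net profit per unit staked, p is the bettor's estimated win probability and q = 1 − p. The function names, the quarter-Kelly multiplier and the example inputs are assumptions chosen for illustration, not details taken from KellyBench.

```python
def kelly_fraction(p_win: float, decimal_odds: float) -> float:
    """Full-Kelly fraction of bankroll for one binary bet.

    p_win: the bettor's own estimate of the win probability.
    decimal_odds: bookmaker decimal odds (total payout per unit staked).
    """
    if not 0.0 <= p_win <= 1.0:
        raise ValueError("p_win must be in [0, 1]")
    if decimal_odds <= 1.0:
        raise ValueError("decimal_odds must exceed 1")
    b = decimal_odds - 1.0                    # net profit per unit staked
    f = (b * p_win - (1.0 - p_win)) / b       # f* = (b*p - q) / b
    return max(f, 0.0)                        # no edge means no bet


def fractional_kelly_stake(bankroll: float, p_win: float, decimal_odds: float,
                           multiplier: float = 0.25) -> float:
    """Stake a fixed fraction (assumed here: one quarter) of full Kelly."""
    return bankroll * multiplier * kelly_fraction(p_win, decimal_odds)


if __name__ == "__main__":
    # Hypothetical inputs: a 53% win estimate at even odds (decimal 2.0).
    print(kelly_fraction(0.53, 2.0))
    print(fractional_kelly_stake(100_000, 0.53, 2.0))
```

Under these assumed inputs, full Kelly comes to about 6% of the bankroll, so quarter Kelly on £100,000 would stake roughly £1,500. When the estimated probability is at or below the odds-implied probability, the function returns zero and no bet is placed.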

Failures ranged from code bugs to oversized wagers. Grok 4.20 failed all three test runs, going fully bankrupt in one and forfeiting midseason in the other two. Gemini Flash placed a single oversized £273,000 wager based on a roughly three-percentage-point historical edge and lost, producing two forfeitures.

Kimi K2.5 generated a correct fractional Kelly function but a formatting bug prevented the function from being called; the model repeatedly sent a broken command and then placed an accidental £114,000 bet, equal to about 98% of its remaining bankroll, on a Burnley–Luton match.

GLM-5 produced multiple self-critiques that flagged a hardcoded 25% draw rate and an overestimated home advantage but did not change its code and continued to place the same losing bets until funds were exhausted.

GPT-5.4 ran extensive model-building calls, compared its log-loss (0.974) to the market’s (0.971), concluded it had no edge, and spent the season placing minimal bets; the model lost 13.6% on average. Claude Opus 4.6 lost 11% on average and scored highest on a separate strategy rubric.
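The log-loss comparison attributed to GPT-5.4 can be sketched as follows. This is a minimal version that assumes a three-way outcome (home win, draw, away win) and natural logarithms; the article does not say how the benchmark computed the metric, and the function name and toy numbers are hypothetical.

```python
import math


def mean_log_loss(probs, outcomes, eps=1e-15):
    """Average negative log-probability assigned to the realized outcome.

    probs: one [p_home, p_draw, p_away] list per match.
    outcomes: index of the realized result per match (0, 1 or 2).
    Lower is better; eps guards against log(0).
    """
    if len(probs) != len(outcomes) or not outcomes:
        raise ValueError("probs and outcomes must be non-empty and equal length")
    total = 0.0
    for p, y in zip(probs, outcomes):
        total += -math.log(max(p[y], eps))
    return total / len(outcomes)


if __name__ == "__main__":
    # Toy data, invented for illustration only.
    results = [0, 2, 1]
    model = [[0.50, 0.27, 0.23], [0.30, 0.28, 0.42], [0.40, 0.30, 0.30]]
    market = [[0.52, 0.26, 0.22], [0.28, 0.27, 0.45], [0.41, 0.29, 0.30]]
    print(mean_log_loss(model, results), mean_log_loss(market, results))
```

For reference, a forecaster that always assigns one third to each outcome scores ln 3 ≈ 1.0986 under this definition. A model whose log-loss is no lower than the market's has no demonstrated edge, in which case the Kelly criterion prescribes little or no stake.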

Researchers evaluated strategy beyond returns with a 44-point sophistication rubric covering feature engineering, stake sizing, handling non-stationarity and execution. Claude Opus 4.6 scored 32.6% of available points. Higher rubric scores correlated with lower bankruptcy rates and better returns (p = 0.008). A Dixon–Coles model from the late 1990s outperformed six of the eight frontier models on the benchmark, despite using older methods and less data.

Researchers characterized the primary failure as a gap between what models knew and what they executed: agents identified errors and potential fixes but failed to verify that their code implemented corrections, to monitor divergence between intent and execution, or to act on their own diagnoses. Prior studies found related reliability issues when models maximize reward over extended tasks, including simulated slot machines and real-money crypto trading competitions.
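One way to picture the missing check between intent and execution is a guard that runs before any bet is submitted. The sketch below is hypothetical: the function name, the 5% bankroll cap and the 1% tolerance are assumptions for illustration and do not describe KellyBench or any model's actual code.

```python
def validate_stake(intended: float, submitted: float, bankroll: float,
                   max_fraction: float = 0.05, rel_tol: float = 0.01) -> float:
    """Return the submitted stake if it passes basic sanity checks, else raise.

    intended: stake the sizing logic computed (e.g. fractional Kelly).
    submitted: stake actually present in the outgoing bet command.
    """
    if bankroll <= 0:
        raise ValueError("bankroll must be positive")
    if submitted < 0:
        raise ValueError("stake cannot be negative")
    # Hard cap: refuse any single bet above a fixed share of the bankroll.
    if submitted > max_fraction * bankroll:
        raise ValueError(
            f"stake {submitted:.2f} exceeds cap of {max_fraction:.0%} of bankroll"
        )
    # Intent check: the command must match what the sizing logic asked for.
    if abs(submitted - intended) > rel_tol * max(abs(intended), 1.0):
        raise ValueError(
            f"submitted stake {submitted:.2f} diverges from intended {intended:.2f}"
        )
    return submitted
```

With these assumed thresholds, a command carrying a £114,000 stake against a bankroll of roughly £116,000 would be rejected before it reached the market.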

Ross Taylor, General Reasoning’s CEO, noted that many AI benchmarks operate in static environments, unlike a 120-matchday season, and said benchmarks should test long-term decision making in changing markets.
